AI-Enabled Teams

What they are and why they're different

Most companies give their teams AI tools and call it done. That's basic AI use, and it might get you a modest productivity bump.

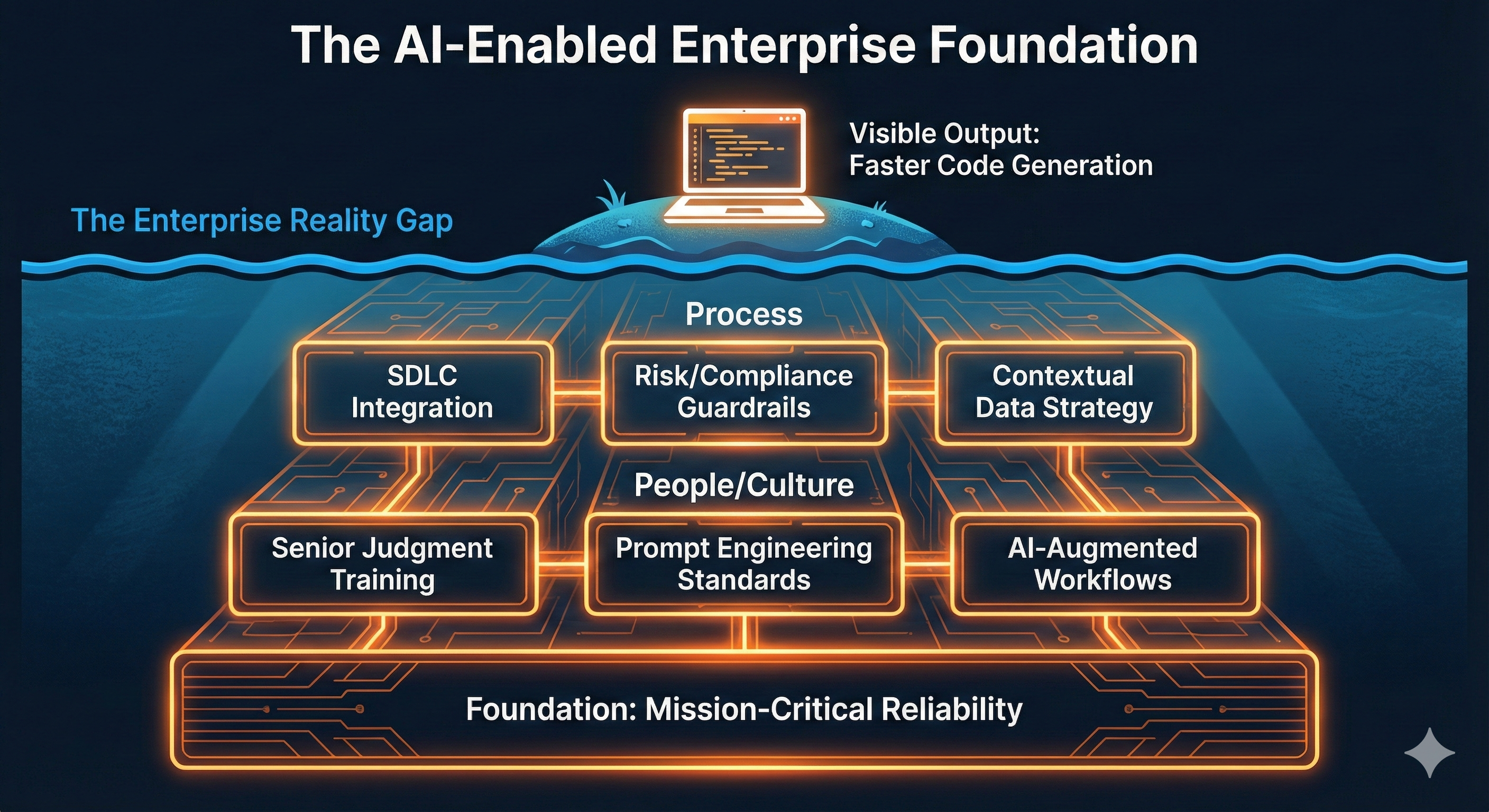

AI-enabled teams go deeper: structured training, integrated tooling, and refined processes across the entire software development lifecycle (SDLC).

Basic AI Use vs. Fully Enabled Teams

The difference is in the approach

Basic AI Use

"We gave everyone an AI tool license."
- Ad-hoc prompting without structured workflows
- Modest 10–20% productivity gains
- Inconsistent results across the team
- AI used mainly for code completion

Fully Enabled Teams

- Structured training and continuous improvement
- AI integrated across the entire SDLC
- Step-change improvements in speed and quality
- Consistent, repeatable outcomes
- Multiple LLM passes: generate, review, verify

Principles of AI-Enabled Teams

How we think about AI in software development

Tool-Agnostic, Outcome-Obsessed

We design workflows around pluggable AI steps rather than hard-wiring them to a single vendor. The best model for a given task will change over time, so our processes stay modular and swappable.
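One way to keep AI steps pluggable is to have workflows depend on a small interface and look up the current model per step. The sketch below is a minimal illustration, not our actual tooling: `ModelClient`, `EchoModel`, `STEP_MODELS`, and `run_step` are hypothetical names, and `EchoModel` stands in for a real vendor client so the example runs offline.

```python
from typing import Protocol


class ModelClient(Protocol):
    """Anything that turns a prompt into text; each vendor SDK gets wrapped behind this."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for a real vendor client, so the sketch runs without network access."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Hypothetical registry: which model currently handles each workflow step.
STEP_MODELS: dict[str, ModelClient] = {
    "draft": EchoModel("model-a"),
    "review": EchoModel("model-b"),
}


def run_step(step: str, prompt: str, registry: dict[str, ModelClient] = STEP_MODELS) -> str:
    """Run one step; swapping vendors means editing the registry, not the workflow."""
    return registry[step].complete(prompt)
```

Changing the model behind a step is a one-line edit such as `STEP_MODELS["draft"] = EchoModel("model-c")`; callers of `run_step` do not change.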

AI as a Pair-Programming Partner

The human sets intent, constraints, and quality thresholds. AI generates code, drafts, and alternatives at speed. Developers are editors and responsible engineers, not just prompt writers.

Start Early in the SDLC

Most organizations use AI only for code completion. We start earlier: requirements clarification, design reviews, and implementation planning. The highest leverage comes before coding begins.

Humans Move Up the Value Chain

As AI handles more routine tasks, engineers shift toward higher-impact work: defining problems, evaluating solutions, integrating systems, and managing risk.

AI Across the Software Development Lifecycle

How AI transforms each phase

Requirements

Feed product specs and domain docs to an LLM. It identifies ambiguities, proposes clarification questions, and suggests edge cases and non-functional requirements, all before work begins.

Design

AI drafts Markdown design documents covering API surfaces, data models, and sequence diagrams. A second LLM critiques the draft for security concerns, scalability risks, and simpler alternatives.

Implementation Plan

AI generates structured breakdowns of tasks, subtasks, ordering, and dependencies. Plans align with the existing architecture, repositories, and conventions.
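Once a plan records which tasks depend on which, ordering falls out of a topological sort, and tasks with no unmet dependencies can proceed in parallel. The sketch below uses Python's standard `graphlib` module (3.9+); the task names are hypothetical, and tests are deliberately placed before the code they cover.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each task maps to the set of tasks it depends on.
plan = {
    "define_schema": set(),
    "write_model_tests": {"define_schema"},
    "write_api_tests": {"define_schema"},
    "implement_model": {"write_model_tests"},
    "implement_endpoint": {"write_api_tests", "implement_model"},
}


def execution_batches(plan: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches; every task in a batch has all its dependencies finished."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan contains a dependency cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make the output deterministic
        batches.append(ready)
        sorter.done(*ready)
    return batches
```

For this plan, `execution_batches(plan)` yields `[["define_schema"], ["write_api_tests", "write_model_tests"], ["implement_model"], ["implement_endpoint"]]`: the two test-writing tasks can run side by side.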

Code & Tests

AI writes tests first, then the implementation code. A second AI pass performs code review, style checks, and coverage analysis before human review. Humans own decisions and final merges.
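The tests-first, second-pass review flow can be sketched as a small pipeline. This is a minimal illustration under stated assumptions: `propose_change`, `ChangeProposal`, and the three callables are hypothetical, and in practice each callable would invoke a model (possibly a different one per step). Note that the pipeline never merges; a clean review only marks the change as ready for a human.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical step signatures; real implementations would call models.
Generate = Callable[[str], str]
Review = Callable[[str, str], list[str]]  # (tests, code) -> list of findings


@dataclass
class ChangeProposal:
    tests: str
    code: str
    findings: list[str] = field(default_factory=list)
    ready_for_human_review: bool = False


def propose_change(spec: str, write_tests: Generate, write_code: Generate, review: Review) -> ChangeProposal:
    tests = write_tests(spec)  # 1. tests come first
    code = write_code(spec + "\n\nTests to satisfy:\n" + tests)  # 2. implementation targets those tests
    findings = review(tests, code)  # 3. an independent second pass checks the result
    # Nothing is merged here: an empty findings list only hands the change to a human reviewer.
    return ChangeProposal(tests, code, findings, ready_for_human_review=not findings)
```

Keeping the reviewer as a separate callable makes it easy to give the review step a different model than the generation step.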

Operations

AI assists with incident triage: it summarizes logs, metrics, and traces, suggests likely root causes and remediation steps, and helps generate and maintain runbooks and operational documentation.
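Raw logs are usually too large to hand to a model verbatim. One common preprocessing step, shown here as an assumption rather than a prescribed method, is to collapse repeated errors into signatures by masking volatile tokens such as IDs and numbers, then pass only the most frequent signatures into the triage prompt. `signature` and `triage_digest` are hypothetical names.

```python
import re
from collections import Counter

# Mask volatile tokens (hex IDs first, then any remaining digits) so repeats collapse together.
_HEX = re.compile(r"\b0x[0-9a-fA-F]+\b|\b[0-9a-f]{8,}\b")
_NUM = re.compile(r"\d+")


def signature(line: str) -> str:
    return _NUM.sub("<n>", _HEX.sub("<id>", line.strip()))


def triage_digest(lines: list[str], top: int = 3) -> str:
    """Summarize ERROR lines as 'count x signature', most frequent first."""
    counts = Counter(signature(line) for line in lines if "ERROR" in line)
    return "\n".join(f"{n} x {sig}" for sig, n in counts.most_common(top))
```

A few hundred timeout errors that differ only in their latency value become a single line such as `2 x ERROR timeout calling payments after <n> ms`, leaving room in the prompt for metrics and traces.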

Agents & Tooling

How AI agents work in development workflows

We use local tools for interactive development and remote agents for batch processing and parallel work, choosing between them based on latency needs and task complexity.

We use agents to work on multiple tickets in parallel while keeping patterns and architecture consistent. Agents also take on tedious tasks such as refactors and bulk API client generation.
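Working tickets in parallel mostly comes down to bounded concurrency plus shared context. The sketch below uses `asyncio` with a semaphore to cap how many agents run at once, and passes every agent the same conventions reference so their output stays consistent. `work_ticket` is a hypothetical placeholder for a real agent invocation, and the limit of three is an arbitrary example value.

```python
import asyncio


async def work_ticket(ticket_id: str, conventions: str) -> str:
    """Hypothetical agent call; each agent receives the same conventions document."""
    await asyncio.sleep(0.01)  # stands in for the agent's real work
    return f"{ticket_id}: done following {conventions}"


async def work_tickets(ticket_ids: list[str], conventions: str, max_parallel: int = 3) -> list[str]:
    limit = asyncio.Semaphore(max_parallel)  # cap concurrent agents (cost, rate limits, review load)

    async def bounded(tid: str) -> str:
        async with limit:
            return await work_ticket(tid, conventions)

    # gather returns results in input order, regardless of which agent finishes first
    return await asyncio.gather(*(bounded(t) for t in ticket_ids))
```

Usage: `asyncio.run(work_tickets(["T-1", "T-2", "T-3", "T-4"], "ARCHITECTURE.md"))` runs at most three agents at a time and returns one result per ticket in the original order.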

Model Context Protocol (MCP) servers and other tool ecosystems offer powerful capabilities, but their tool definitions and results consume the model's context window. We often have agents write small pieces of code that call tools directly, which reduces context pressure and gives us explicit control.
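The idea of agents calling tools from code can be illustrated with a toy example. Instead of loading a tool's full response into the model's context, the agent writes a short script that calls the tool, filters the result locally, and returns only a compact summary. `list_open_issues` is a hypothetical tool wrapper that fabricates 100 records so the sketch runs; a real one might query an issue tracker.

```python
import json


def list_open_issues() -> list[dict]:
    """Hypothetical tool wrapper returning bulky records (here, 100 synthetic issues)."""
    return [
        {"id": i, "title": f"Issue {i}", "labels": ["bug"] if i % 5 == 0 else ["chore"], "body": "..." * 200}
        for i in range(1, 101)
    ]


def summarize_bugs(max_ids: int = 10) -> str:
    """Agent-written code: the full tool output stays in this process; only a summary goes back to the model."""
    bugs = [issue for issue in list_open_issues() if "bug" in issue["labels"]]
    return json.dumps({"bug_count": len(bugs), "ids": [b["id"] for b in bugs][:max_ids]})
```

The model sees a short JSON string with a count and a handful of IDs instead of roughly 60 KB of issue bodies, and the filtering logic is explicit and reviewable.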

Governance & Safety

AI doesn't replace testing, security reviews, or human judgment. It amplifies them.

Tests-First, Always

Tests come first, and AI-generated code goes through the same automated checks as any other code: linting, formatting, and coverage gates.

Human Review for Critical Decisions

AI suggests; humans decide and merge. Final accountability stays with engineers.
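The two rules above, same automated checks for all code and a human decision before merging, can be expressed as a single merge gate. This is a minimal sketch: `PullRequest`, `can_merge`, and the 80% coverage threshold are hypothetical, and real gates usually live in CI and branch-protection settings rather than application code. The `ai_generated` flag is recorded but deliberately ignored by the gate.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    ai_generated: bool  # kept for metrics; the gate treats AI and human code identically
    lint_passed: bool
    format_passed: bool
    coverage: float  # fraction of lines covered, 0.0 to 1.0
    human_approvals: int


def can_merge(pr: PullRequest, min_coverage: float = 0.8) -> tuple[bool, list[str]]:
    """Apply the same automated checks to every change, plus at least one human approval."""
    reasons = []
    if not pr.lint_passed:
        reasons.append("lint failed")
    if not pr.format_passed:
        reasons.append("formatting failed")
    if pr.coverage < min_coverage:
        reasons.append(f"coverage {pr.coverage:.0%} below {min_coverage:.0%}")
    if pr.human_approvals < 1:
        reasons.append("needs human approval")
    return (not reasons, reasons)
```

Returning the list of reasons, not just a boolean, tells the engineer exactly what is blocking the merge.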

Quality Over Velocity

We measure defect rates, lead time, and documentation quality, not just lines of code or tickets closed.

Clear Data Handling

We use approved toolchains with explicit policies on what data may be sent to external models, plus self-hosted options for sensitive environments.
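A data-handling policy becomes enforceable when each request is routed by the sensitivity of the data it carries. The sketch below assumes a hypothetical three-level classification and a `POLICY` table stating the most sensitive data each destination may receive. Real classification and policy would come from the client's own security standards.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


# Hypothetical policy: the highest sensitivity level each destination is approved for.
POLICY = {
    "external_api": Sensitivity.INTERNAL,
    "self_hosted": Sensitivity.RESTRICTED,
}


def choose_destination(data_level: Sensitivity, preferred: str = "external_api") -> str:
    """Use the preferred model if policy allows this data; otherwise fall back to self-hosted."""
    if data_level.value <= POLICY[preferred].value:
        return preferred
    if data_level.value <= POLICY["self_hosted"].value:
        return "self_hosted"
    raise PermissionError(f"no approved destination for {data_level.name} data")
```

With this table, `choose_destination(Sensitivity.PUBLIC)` returns `"external_api"`, while `choose_destination(Sensitivity.RESTRICTED)` returns `"self_hosted"`.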

Works Within Your Enterprise

Our AI practices can adapt to your security standards, compliance requirements, and existing processes when needed.

What This Means for Clients

Practical benefits of working with AI-enabled teams

Faster Iteration Without Sacrificing Reliability

Ship features faster while maintaining high quality and test coverage. AI accelerates the path; human judgment ensures the destination is right.

Better Requirements Clarity Up Front

AI surfaces edge cases and ambiguities early in the process—before they become expensive mid-sprint discoveries or production incidents.

Clearer Documentation and Auditability

AI-generated design docs, decision records, and runbooks create structured artifacts that improve onboarding and support audit requirements in regulated environments.

Higher Test Coverage and Consistency

AI-generated tests follow consistent patterns and broaden coverage. Tests are written first, not as an afterthought.

Ready to Work with an AI-Enabled Team?

Let's talk about bringing AI-enabled practices to your mission-critical systems.